Are skeptics really bad at making their case? Or are warming alarmists purposely avoiding the skeptics’ best arguments? That’s the question I am left with after reading this Scientific American article supposedly shooting down the skeptics’ seven best arguments. Let’s walk very briefly through all seven. If you don’t want to go through these individually, I will preview the ending, or you can skip to it: None of these seven includes any of the most powerful or central arguments of skeptics. At the end of this article I offer seven competing skeptics’ claims that never seem to get addressed.

Claim 1: Anthropogenic CO2 can’t be changing climate, because CO2 is only a trace gas in the atmosphere and the amount produced by humans is dwarfed by the amount from volcanoes and other natural sources. Water vapor is by far the most important greenhouse gas, so changes in CO2 are irrelevant.

I have never really relied on this argument, so I am not going to bother with this one.

Claim 2: The alleged “hockey stick” graph of temperatures over the past 1,600 years has been disproved. It doesn’t even acknowledge the existence of a “medieval warm period” around 1000 A.D. that was hotter than today is. Therefore, global warming is a myth.

Without digging into the detail of proxies and statistical methods, it is nearly impossible to discuss the hockey stick in 3 paragraphs. But in the end it doesn’t matter because the author and I agree that it doesn’t matter. The author writes:

But hypothetically, even if the hockey stick was busted… what of it? The case for anthropogenic global warming originally came from studies of climate mechanics, not from reconstructions of past temperatures seeking a cause. Warnings about current warming trends came out years before Mann’s hockey stick graph. Even if the world were incontrovertibly warmer 1,000 years ago, it would not change the fact that the recent rapid rise in CO2 explains the current episode of warming more credibly than any natural factor does—and that no natural factor seems poised to offset further warming in the years ahead.

Leaving off the very end, where he goes sailing into the aether by saying incontrovertibly that the rise in CO2 explains our current episode of warming, he says that the hockey stick isn’t really evidence at all, no matter what it says. I agree. But skeptics weren’t the ones who brought it up as relevant evidence, the alarmists did. If they are walking away from it, fine. [By the way, this is an absolutely core technique of climate science – defend a flawed analysis like a mother bear, claim it is the smoking gun that proves everything, and then when forced to finally accept that it is flawed say that it doesn’t matter.]

Claim 3: Global warming stopped a decade ago; the earth has been cooling since then.

His answer here is really an amazing bit of cognitive dissonance. He writes:

Anyone with even a glancing familiarity with statistics should be able to spot the weaknesses of that argument. Given the extended duration of the warming trend, the expected (and observed) variations in the rate of increase and the range of uncertainties in the temperature measurements and forecasts, a decade’s worth of mild interruption is too small a deviation to prove a break in the pattern, climatologists say….

If a lull in global warming continues for another decade, would that vindicate the contrarians’ case? Not necessarily, because climate is complex.

So even a 20 year lack of warming does not disprove that CO2 is causing 0.2C – 0.25C per decade of warming or more, because natural variations could mask this or offset it somehow (offsetting therefore as much as 0.5C by natural variation in the cooling direction over two decades).
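The arithmetic behind that parenthetical can be sketched in a few lines. This is only a back-of-the-envelope check using the per-decade rates quoted above, not measured data; the function name `implied_offset` is made up for illustration.

```python
# Back-of-the-envelope check of the figures quoted in the text.
# Assumption: the claimed CO2-driven trend is 0.20-0.25 C per decade,
# and the hypothetical lull lasts two decades.

def implied_offset(rate_c_per_decade: float, decades: float) -> float:
    """Cooling that natural variation must supply to fully mask the trend."""
    return rate_c_per_decade * decades

low = implied_offset(0.20, 2)   # lower end of the quoted range
high = implied_offset(0.25, 2)  # upper end of the quoted range

print(f"Natural offset needed over two decades: {low:.2f} C to {high:.2f} C")
```

So a two-decade lull implies natural cooling of roughly 0.4C to 0.5C, which is the number that matters in the comparison that follows.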

Some might ask, can’t the warming be hiding or taking some time off? First, if the theory is right, it can’t be taking time off. It has to warm, year in and year out. It can hide in the deep oceans, but new technologies like the ARGO floats, deployed since 2002, have shown no increase in ocean heat content over the past 6-7 years. This is why scientists are stuck positing there is some natural phenomenon offsetting the heating with cooling.

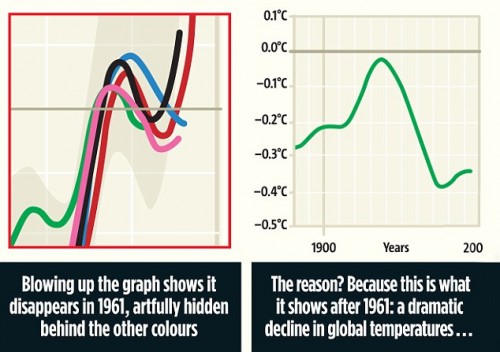

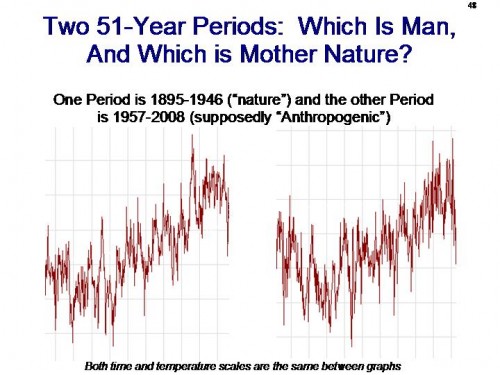

But here is the problem, not that any warming scientists are honest enough to raise it. Their entire argument that recent warming has been driven by CO2 (as the author confidently asserted above) is that scientists are unable to explain the warming since 1950 any other way (i.e., it can’t be explained by natural factors). Leave aside that this assertion is based solely on runs of their flawed models – we will get to that later. Look at the temperature curve for the past decades:

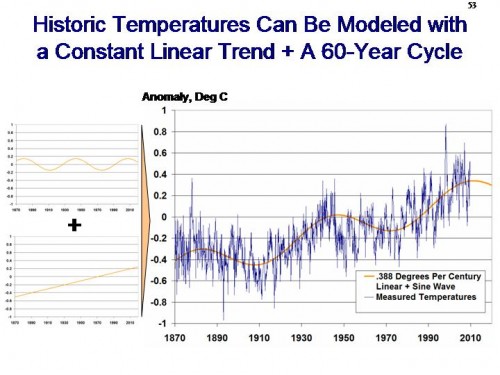

From this we see two things. First, warming since 1950 really means warming since about 1975-1980, since there was a flat period before that. Second, this warming over the two decades of the 80’s and 90’s was between 0.4 and 0.5C.

So, do you see the problem? The entire foundation of global warming alarmism is based on the fact that their computer models can’t imagine anything natural that would warm things by as much as 0.4-0.5C over two decades. But now, to save the theory, they are positing that there are natural cooling effects that will offset 0.4-0.5C over two decades. Either natural effects can move temperatures a half degree over two decades or they can’t (by the way, if you want a hint, look at 1910-1940, where temperatures moved 0.6C over three decades long before man put much CO2 in the air.)
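To make the comparison concrete, here is a minimal sketch that puts the three episodes on the same per-decade footing. It uses only the round numbers quoted in this post (not a temperature dataset), and the names `rate_per_decade` and `episodes` are hypothetical, chosen just for this illustration.

```python
# Compare the episodes discussed in the text on a per-decade basis.
# All figures are the round numbers quoted in the post, not measurements.

def rate_per_decade(change_c: float, decades: float) -> float:
    """Average temperature change per decade over an episode."""
    return change_c / decades

# (label, temperature change in C, duration in decades)
episodes = [
    ("1980s-90s warming, low estimate", 0.4, 2),
    ("1980s-90s warming, high estimate", 0.5, 2),
    ("1910-1940 warming", 0.6, 3),
    ("Posited natural offset, low estimate", 0.4, 2),
    ("Posited natural offset, high estimate", 0.5, 2),
]

rates = {label: rate_per_decade(change, decades)
         for label, change, decades in episodes}

for label, rate in rates.items():
    print(f"{label}: {rate:.2f} C per decade")
```

On these numbers every episode works out to roughly 0.2C to 0.25C per decade, which is the point: the natural swing being ruled out for the warming is the same size as the natural swing being invoked for the lull.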

Claim 4: The sun or cosmic rays are much more likely to be the real causes of global warming. After all, Mars is warming up, too.

A couple of issues here. First, here is a great example of assuming your conclusion.

The IPCC notes that between 1750 and 2005, the radiative forcing from the sun increased by 0.12 watts/square-meter—less than a tenth of the net forcings from human activities [pdf] (1.6 W/m2).

Skeptics think that the net forcing numbers from human activities are overstated, mainly due to over-assumptions of positive feedback effects. Rather than address this issue, he just assumes the forcing number is right and then says this “proves” skeptics are wrong.

He goes on to say that Svensmark’s cosmic ray theory of cloud formation is not well proven. I would agree with him that it is a formative theory and needs a lot more evidence and authentication before I am ready to say it represents how the world works. Which is one reason you will see me sometimes reporting on updates on his theory but you won’t find it in my core arguments on the topic.

But the interesting thing to me is that all the arguments the author makes against Svensmark could equally well apply to anthropogenic greenhouse gas warming theories. Both have been demonstrated in the laboratory, but it is unclear how either works in the complex climate system. Both have a few correlations going for them historically, but no smoking gun of causation. The asymmetry of skepticism applied to Svensmark’s evidence vs. that of CO2 warming is interesting.

Claim 5: Climatologists conspire to hide the truth about global warming by locking away their data. Their so-called “consensus” on global warming is scientifically irrelevant because science isn’t settled by popularity.

*Shrug* Ad hominem argument, don’t really care. I have tried to be careful in all the CRU email flap to be clear that the substantial failures of scientific process don’t prove or disprove anything – they just mean that the science is not as settled as has been portrayed and that we need more transparency to let the evidence get battle-tested. I personally think a lot of it will collapse, but we actually have to still disprove it — just because it came from unethical folks does not make it wrong, any more than guys who took money from Exxon 20 years ago are automatically wrong either.

Claim 6: Climatologists have a vested interest in raising the alarm because it brings them money and prestige.

I actually think the author is naive or disingenuous to try to argue against this. Twenty years ago, climate science was a backwater with no money and no prestige. Now governments of the world spend billions, and Presidents know their names. Just the fact that average people know the names James Hansen and Phil Jones and Michael Mann disproves the author’s point.

However, I am more than happy to totally leave this point behind and just forget about it, if I am allowed just one playground rejoinder – you guys started it. I am wondering why this argument was OK for years when it was skeptics and a few thousand dollars of Exxon’s money but is now totally irrelevant when the alarmists are getting most of the funding, and the money runs up into the billions. But, as I said, if we want to declare a truce on ad hominem funding arguments, fine by me.

Claim 7: Technological fixes, such as inventing energy sources that don’t produce CO2 or geoengineering the climate, would be more affordable, prudent ways to address climate change than reducing our carbon footprint.

I am not a big fan of geoengineering climate, any more than I am of micromanaging economics. The same problems apply: where systems are complex and chaotic, the potential for unintended consequences is high.

Here is what he left out

So now it’s my turn. I will propose my own seven skeptics’ claims that are much more at the heart of our argument but which you never, ever see addressed in these types of articles. By the way, if you think I am somehow moving the bar, see my climate speech, published before the Scientific American article, which highlights the claims below. Or see Richard Lindzen’s excellent summary article in the WSJ.

Claim A: Nearly every scientist, skeptic and alarmist alike, agrees that the first order warming from CO2 is small. Catastrophic forecasts that demand immediate government action are based on a second theory that the climate temperature system is dominated by positive feedback. There is little understanding of these feedbacks, at least in their net effect, and no basis for assuming feedbacks in a long-term stable system are strongly net positive. As a note, the claim is that the net feedbacks are not positive, so demonstrations of single one-off positive feedbacks, like ice albedo, are not sufficient to disprove this claim. In particular, the roles of the water cycle and cloud formation are very much in dispute.

Claim B: At no point have climate scientists ever reconciled the claims of the dendroclimatologists like Michael Mann that world temperatures were incredibly stable for thousands of years before man burned fossil fuels with the claim that the climate system is driven by very high net positive feedbacks. There is nothing in the feedback assumptions that applies uniquely to CO2 forcing, so these feedbacks, if they exist today, should have existed in the past and almost certainly have made temperatures highly variable, if not unstable.

Claim C: On its face, the climate model assumptions (including high positive feedbacks) of substantial warming from small changes in CO2 are inconsistent with relatively modest past warming. Scientists use what is essentially an arbitrary plug variable to handle this, assuming anthropogenic aerosols have historically masked what would be higher past warming levels. The arbitrariness of the plug is obvious given that most models include a cooling effect of aerosols in direct proportion to their warming effect from CO2, two phenomena that should not be linked in nature, but are linked if modelers are trying to force their climate models to balance. Further, since aerosols are short lived and only cover about 10% of the globe’s surface in any volume, nearly heroic levels of cooling effects must be assumed, since it takes 10C of cooling from the 10% area of effect to get 1C cooling in the global averages.
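The 10C figure is just an area-weighted average run in reverse. The sketch below shows that calculation under the simplifying assumption made in the claim itself: aerosols cool only the roughly 10% of the surface they cover and have zero effect everywhere else. The function name `required_local_cooling` is illustrative only.

```python
# Area-weighted average, inverted: how much local cooling is needed in the
# covered region to produce a given cooling in the global mean?
# Assumption (from the text): aerosols affect only the covered fraction of
# the surface and contribute zero cooling elsewhere.

def required_local_cooling(global_cooling_c: float, covered_fraction: float) -> float:
    """Local cooling (C) needed over the covered area to hit the global figure."""
    if not 0 < covered_fraction <= 1:
        raise ValueError("covered_fraction must be in (0, 1]")
    # global mean = covered_fraction * local + (1 - covered_fraction) * 0
    return global_cooling_c / covered_fraction

local = required_local_cooling(global_cooling_c=1.0, covered_fraction=0.10)
print(f"Local cooling needed for 1 C of global-average cooling: {local:.0f} C")
```

If the affected area were larger, or if aerosols had some effect outside it, the required local cooling would be smaller; the 10% coverage figure is the one doing the work here.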

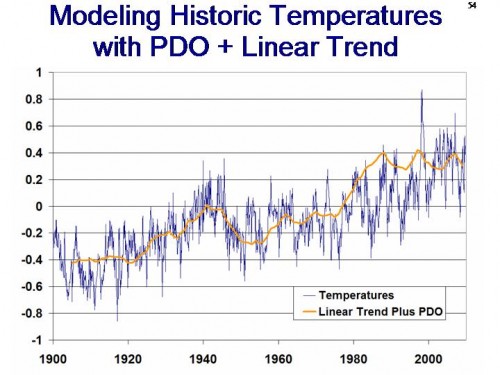

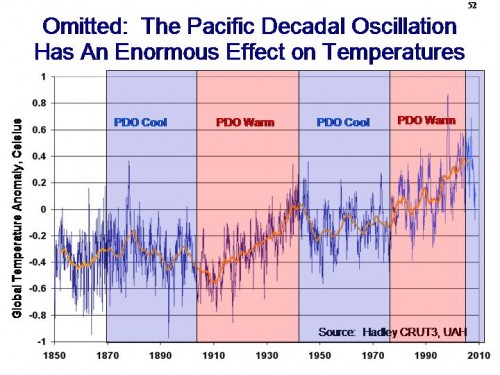

Claim D: The key issue is the effect of CO2 vs. other effects in the complex climate system. We know CO2 causes some warming in a lab, but how much on the real earth? The main evidence climate scientists have is that their climate models are unable to replicate the warming from 1975-1998 without the use of man-made CO2 — in other words, they claim their models are unable to replicate the warming with natural factors alone. But these models are not anywhere near good enough to be relied on for this conclusion, particularly since they admittedly leave out any number of natural factors, such as ocean cycles and longer term cycles like the one that drove the little ice age, and admit to not understanding many others, such as cloud formation.

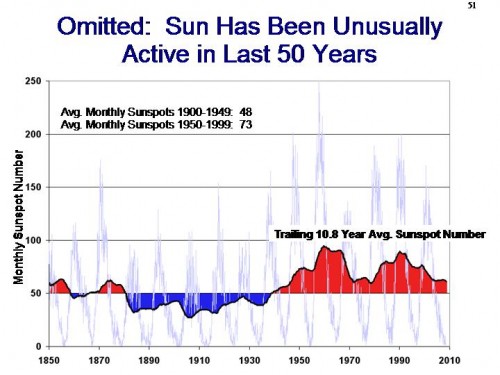

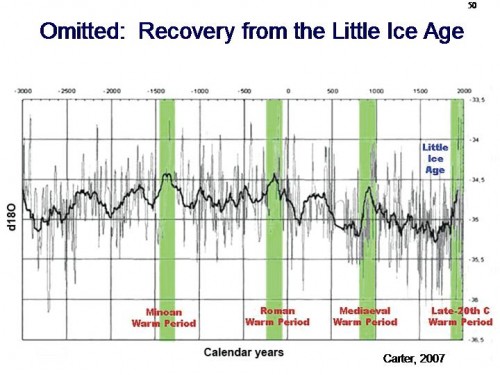

Claim E: There are multiple alternate explanations for the 1975-1998 warming other than manmade CO2. All likely contributed (along with CO2) but there is no evidence to give most of the blame to CO2. Other factors include ocean cycles (this corresponded to a PDO warm phase), the sun (this corresponded to the most intense period of the sun in the last 100 years), mankind’s land use changes (driving both urban heating effects as well as rural changes with alterations in land use), and a continuing recovery from the Little Ice Age, perhaps the coldest period in the last 5000 years.

Claim F: Climate scientists claim that the 0.4-0.5C warming from 1975-1998 cannot have been caused by natural variations. This has never been reconciled with the fact that the 0.6C warming from 1910 to 1940 was almost certainly due mostly to natural forces. Also, the claim that natural forcings could not have caused a 0.2C per decade warming in the 80’s and 90’s cannot be reconciled with the current claimed natural “masking” of anthropogenic warming that must be on the order of 0.2C per decade.

Claim G: Climate scientists are embarrassing themselves in their use of the term “climate change.” First, the only mechanism ever expressed for CO2 to change climate is via warming. If there is no warming, then CO2 can’t be causing climate change by any mechanism anyone has ever suggested. So saying that “climate change is accelerating” (just Google it) when warming has stopped is disingenuous, and a false marketing effort to try to keep the alarm ringing. Second, the attempts by scientists who should know better to identify weather events at the tails of the normal distribution and claim that these are evidence of a shift in the mean of the distribution are ridiculous. There are no long term US trends in droughts or wet weather, nor in global cyclonic activity, nor in US tornadoes. But every drought, hurricane, flood, or tornado is cited as evidence of accelerating climate change (see my ppt slide deck for the data). This is absurd.