Today’s release of the study, titled Global Climate Change Impacts in the United States, was overseen by a San Francisco-based media consulting company…

The nearly 200-page study was scrubbed of the usual scientific jargon, and was given a high-profile release by Obama’s science advisor, John Holdren, and the head of the National Oceanic and Atmospheric Administration (NOAA), Jane Lubchenco.

Wow, that’s sure how I learned to handle a scientific report back when I was studying physics – scrub it of the science and give it to an activist PR firm! Do you need any more evidence that climate science has become substantially dominated by post-modernist scientists, for whom ideological purity and staying on message are more important than actually getting the science right?

I saw a draft of this report last year, but I am still trying to download this new version. I expect to be sickened. Here is a taste of where they are coming down:

If today’s generation fails to act to reduce the carbon emissions that cause global warming, climate models suggest temperatures could rise as much as 11F by the end of the century.

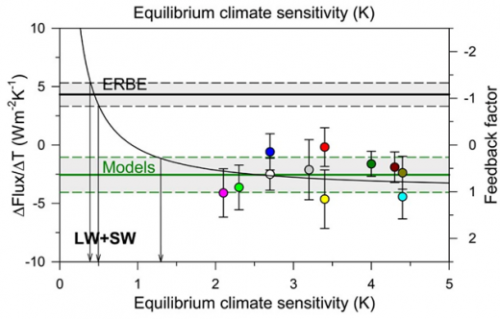

A rise of 11F is about 6.1C. I don’t know if they get this by increasing the CO2 forecast or by increasing the sensitivity or both, but it is vastly higher than the forecasts even of the over-apocalyptic IPCC. I think one can fairly expect two things, though: 1) more than 2/3 of this warming will be attributed to positive feedback effects rather than CO2 acting alone, and 2) there will be little or no discussion of the evidence for whether such positive feedback effects actually dominate the climate.
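
For anyone who wants to check that conversion: the 11F figure is a temperature rise, not a thermometer reading, so it scales by 5/9 with no 32-degree offset. Here is a minimal sketch in Python; the function name `delta_f_to_c` is my own, made up for illustration, and is not taken from the report.

```python
def delta_f_to_c(delta_f: float) -> float:
    """Convert a temperature *difference* from Fahrenheit to Celsius.

    A difference only needs the 5/9 scale factor. The familiar
    "subtract 32" step applies to absolute readings, not to changes.
    """
    return delta_f * 5.0 / 9.0


if __name__ == "__main__":
    # The 11F rise quoted above, rounded to one decimal place.
    print(round(delta_f_to_c(11.0), 1))
```

Treating 11F as an absolute reading and subtracting 32 first would give a nonsensical negative number, which is why the distinction matters.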

Apparently the report will make up for having all the science stripped out by spending a lot of time on gaudy worst case scenarios:

That translates into catastrophic consequences for human health and the economy such as more ferocious hurricanes in coastal regions – in the Pacific as well as the Atlantic, punishing droughts to the south-west, and increasingly severe winter storms in the north-east and around the Great Lakes.

The majority of North Carolina’s beaches would be swallowed up by the sea. New England’s long and snowy winters might be cut short to as little as two weeks. Summers in Chicago could be a time of repeated deadly heat waves. Los Angelenos and residents of other big cities will be choking because of deteriorating air quality.

Future generations could face potential food shortages because of declining wheat and corn yields in the breadbasket of the mid-west, increased outbreaks of food poisoning and the spread of epidemic diseases.

This strikes me as roughly equivalent to turning in a copy of Lucifer’s Hammer in response to a request for a scientific study of the physics of comets.