I wouldn’t say that I am a total sun hawk, meaning that I believe the sun and natural trends are 100% to blame for global warming. I don’t think it unreasonable to posit that once all the natural effects are unwound, man-made CO2 may be contributing a trend of 0.5-1.0C per century (note this is far below alarmist forecasts).

But the sun almost had to be an important factor in late 20th century warming. Previously, I have shown this chart of sunspot activity over the last century, demonstrating a much higher level of solar activity in the second half than the first (the 10.8-year moving-average window was chosen to match the average length of a 20th century sunspot cycle).
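
For anyone who wants to try the smoothing themselves, here is a minimal sketch of a centered moving average whose window is about one sunspot cycle long. It assumes monthly data, so 10.8 years becomes roughly 129 months (an odd number, to keep the window centered). The function name and the input series are my own illustration: the series is synthetic, not real sunspot counts, and is built so that the cycle averages out and only the change in level remains.

```python
import math


def centered_moving_average(values, window):
    """Return the centered moving average of `values` over `window` samples.

    Positions that lack a full window on both sides are returned as None.
    """
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    smoothed = [None] * len(values)
    for i in range(half, len(values) - half):
        segment = values[i - half : i + half + 1]
        smoothed[i] = sum(segment) / window
    return smoothed


# Assumption: monthly samples, so a 10.8-year window is about 129 months.
MONTHS_PER_CYCLE = 129

# Synthetic stand-in for a sunspot series (NOT real data): a repeating cycle
# riding on a baseline that steps up halfway through the record.
series = [
    (60 if month < 600 else 90)
    + 50 * math.sin(2 * math.pi * month / MONTHS_PER_CYCLE)
    for month in range(1200)
]

smoothed = centered_moving_average(series, MONTHS_PER_CYCLE)
```

Because the window spans one full cycle, the up-and-down of the cycle cancels and the smoothed series tracks the underlying level, which is the quantity the chart is meant to show.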

Alec Rawls has an interesting point to make about how folks are considering the sun’s effect on climate:

Over and over again the alarmists claim that late 20th century warming can’t be caused by the solar-magnetic effects because there was no upward trend in solar activity between 1975 and 2000, when temperatures were rising. As Lockwood and Fröhlich put it last year:

Since about 1985,… the cosmic ray count [inversely related to solar activity] had been increasing, which should have led to a temperature fall if the theory is correct – instead, the Earth has been warming. … This should settle the debate.

Morons. It is the levels of solar activity and galactic cosmic radiation that matter, not whether they are going up or down. Solar activity jumped up to “grand maximum” levels in the 1940’s and stayed there (averaged across the 11 year solar cycles) until 2000. Solar activity doesn’t have to keep going up for warming to occur. Turn the gas burner under a pot of stew to high and the stew will heat. You don’t have to keep turning the flame up further and further to keep getting heating!
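
The stew analogy can be sketched with a toy one-box energy balance, in which temperature responds to the level of forcing rather than to its trend. This is only an illustration under stated assumptions: the equation C * dT/dt = F - feedback * T is a textbook simplification, and the heat capacity, feedback and forcing values below are hypothetical numbers chosen by me, not estimates from Rawls or from any paper.

```python
def simulate_temperature(forcing, heat_capacity=8.0, feedback=1.25, dt=1.0):
    """Integrate C * dT/dt = F(t) - feedback * T with forward Euler steps.

    `forcing` holds one value per time step (W/m2, hypothetical).
    `heat_capacity` and `feedback` are illustrative, not measured, values.
    Returns the temperature anomaly after each step.
    """
    temperature = 0.0
    history = []
    for f in forcing:
        temperature += dt * (f - feedback * temperature) / heat_capacity
        history.append(temperature)
    return history


# Forcing jumps to a higher level at step 40 and then stays flat,
# like turning the burner to high and leaving it there.
forcing = [0.0] * 40 + [0.5] * 60
temps = simulate_temperature(forcing)

# Equilibrium for the held forcing is F / feedback = 0.5 / 1.25 = 0.4.
equilibrium = 0.5 / 1.25
```

In this sketch the temperature keeps climbing for a while after the forcing stops rising, then levels off near the equilibrium value. How long the climb lasts depends entirely on the assumed heat capacity, which is a free parameter here.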

Update: A commenter argues that I am simplistic and immature in this post. I find this odd, I guess, for the following reason. One group tends to argue that the sun is largely irrelevant to the past century’s temperature increases. Another argues that the sun is the main or only driver. I argue that the evidence seems to point to it being a mix, with the sun explaining some but not all of the 20th century increase, and I am the one who is simplistic?

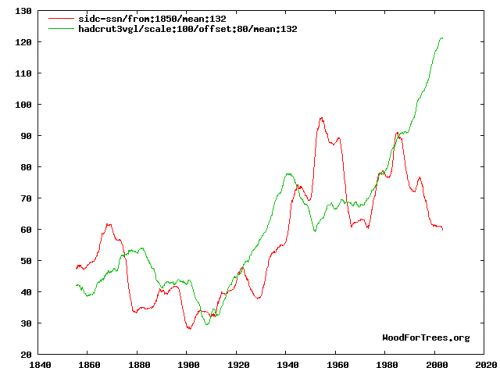

The commenter links to this graph, which I will include. It is a comparison of the Hadley CRUT3 global temperature index (green) and sunspot numbers (red):

Since I am so ridiculously immature, I guess I don’t trust myself to interpret this chart, but I would have happily used this chart myself had I had access to it originally. It’s wildly dangerous to try to visually interpret data and data correlations, but I don’t think it is unreasonable to say that there might be a relationship between these two data sets. Certainly not 100%, but then again the same could easily be said of the relationship of temperature to CO2. The same type of inconsistencies the commenter points out in this correlation could easily be pointed out for CO2 (e.g., why, if CO2 was increasing, and in fact accelerating, were temps in 1980 lower than 1940?).

The answer, of course, is that climate is complicated. But I see nothing in this chart that is inconsistent with the hypothesis that the sun might have been responsible for half of the 20th century warming. And if CO2 is left with just 0.3-0.4C warming over the last century, it is a very tough road to get from past warming to sensitivities as high as 3C or greater. I have all along contended that CO2 will likely drive 0.5-1.0C warming over the next century, and see nothing in this chart that makes me want to change that prediction.
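
The arithmetic behind that "tough road" can be sketched as a back-of-the-envelope calculation. The assumptions here are mine and are loud ones: warming is taken to scale with the base-2 logarithm of CO2 concentration, the attributed warming is treated as the full response (no lag, no offsetting influences), and the start and end concentrations of 280 and 380 ppm are illustrative round numbers rather than figures from this post.

```python
import math


def implied_sensitivity(warming_c, co2_start_ppm, co2_end_ppm):
    """Warming per CO2 doubling implied by an attributed warming.

    Assumes temperature change is proportional to log2 of the
    concentration ratio and that the response is immediate.
    """
    doublings = math.log2(co2_end_ppm / co2_start_ppm)
    return warming_c / doublings


# Illustrative (assumed) concentrations, not taken from the post.
CO2_START_PPM = 280.0
CO2_END_PPM = 380.0

low = implied_sensitivity(0.3, CO2_START_PPM, CO2_END_PPM)
high = implied_sensitivity(0.4, CO2_START_PPM, CO2_END_PPM)
```

Under these assumptions, 0.3-0.4C of CO2-driven warming works out to something under 1C per doubling. Change the assumed concentrations or allow for a lag in the response and the number moves, so treat it as a sketch of the reasoning, not a result.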

Update #2: I guess I must be bored tonight, because commenter Jennifer has inspired me to go beyond my usual policy of not mixing it up much in the comments section. A lengthy response to her criticism is here.

Lubos Motl posted Nir Shaviv’s comments on the Lockwood & Fröhlich paper some time ago. I could not connect with Shaviv’s web site.

Lubos’ “The Reference Frame” link is here:

http://motls.blogspot.com/2007/07/nir-shaviv-why-is-lockwood-and-frohlich.html

I have been researching for my brand new but not yet set up web site, and one source of many is space.com, which shows that since the 1970’s (accuracy confirmed by solar satellites, which weren’t set up until the 1970’s) the sun’s output has risen by .05% per decade. Granted, that’s not a large percentage, but among other things it shows that Lockwood and Fröhlich are full of bull.

Lockwood and Frohlich would have made equal sense arguing that we are due for a warming trend over the next month and a half. Since the days are now growing longer, we should expect January to be warmer than December. Likewise we should expect July and August to be cooler than June.

So why aren’t the last 60 years called by their proper name, “the modern solar maximum”? I guess it’s only to be used in hushed quarters.

I believe that if we observe the current daylight length, and the speed at which it is receding daily, by summer we will be totally in the dark.

Any chance of overlaying this chart with an index of ADO/PDO mode intensity? I suspect that putting those two metrics together might yield some interesting results.

I know this is off topic but thought it might be worthwhile.

I have sent these questions to Dr Chapman at CT.

Dr William Chapman,

Can you please explain a couple of things on the Cryosphere Today “Compare side-by-side images of Northern Hemisphere sea ice extent” product? Why does the snow in the more recent dates cover areas that were previously sea inlets, fjords, coastal sea areas, islands and rivers? (Water areas, most easily discernible in the River Ob inlet)

Why does the sea ice in the older images cover land areas? (Land areas, most easily discernible in River Ob inlet)

See this overlay:

http://i44.tinypic.com/330u63t.jpg

Looking forward to your answer,

Mike Bryant

Thanks for looking at this,

Mike Bryant

Sorry if I keep forwarding Michael Crichton as an authority, but if you read his speeches, you will see why he was invited to speak at many venues.

To summarize, there is no way that one thing (human produced CO2) is to blame for global warming, assuming the planet even is warming. History shows us that sometime in the next few centuries, this planet is going to get damn cold.

This has to do with complexity. The earth is a very complex system. Reducing the human output of CO2 will not, in any way, have an effect on the temperature of the planet. It is only one part of the puzzle. What happens if CO2 is reduced to the level the enviro-nazis want and the temperature still goes up? Then what? Methane? How about water vapor?

Research systems complexity and you will see that whatever it is that humans do to “fix” the “problem” of “global warming” will just be a disaster.

Staggering. You obviously haven’t read a single scholarly paper on this, or even looked at the data. This graph should dispel all your wrong-headed thinking. It’s the temperature, and monthly sunspot numbers, both plotted as 11 year running means (and scaled so that they roughly align). How, exactly, did rising temperatures in 1920 trigger increased solar activity 10 years later? Why did a peak in solar activity in the 1950s not correspond to a rise in temperatures then? Why do the green line and the red line diverge so wildly after 1985? Why is there basically hardly any correlation between solar activity and temperatures, over the last 150 years?

You betray a great immaturity on this web page, regurgitating the same nonsense time and again, calling people ‘morons’ and never even having the courtesy to respond when people tell you you’re wrong. When a fellow denialist tells you you’ve misrepresented him, and you don’t even bother to reply, let alone correct your error, what becomes crystal clear is that you’re simply dishonest.
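
The lead-and-lag questions raised in the comment above can be checked numerically instead of by eye. Here is a minimal sketch of a lagged Pearson correlation between a "driver" series and a "response" series. The function names are hypothetical and the two series are synthetic (one is simply the other delayed by five steps), so this demonstrates the technique only and says nothing about the real sunspot or temperature records.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def lagged_correlation(driver, response, lag):
    """Correlate driver[t] with response[t + lag].

    A positive lag means the response trails the driver; a negative lag
    means the response leads it. Both series must have the same length.
    """
    if lag >= 0:
        xs = driver[: len(driver) - lag]
        ys = response[lag:]
    else:
        xs = driver[-lag:]
        ys = response[: len(response) + lag]
    return pearson(xs, ys)


# Synthetic example (NOT real data): the response is the driver delayed by 5.
driver = [math.sin(2 * math.pi * t / 40) for t in range(200)]
response = [math.sin(2 * math.pi * (t - 5) / 40) for t in range(200)]

correlations = {lag: lagged_correlation(driver, response, lag) for lag in range(-10, 11)}
best_lag = max(correlations, key=correlations.get)
```

One caution if this is applied to running means: smoothing makes neighbouring points strongly dependent on each other, so correlations between two smoothed series tend to look more impressive than the underlying data justify.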

Also to Jennifer, and others…:

Besides the sunspot numbers, I think solar activity measured as magnetic storms peaked during the 1990s. Thus there were also very few intergalactic cosmic rays then.

Palle et al. (who in another paper [1] describe a very strong correlation between CR and low level clouds) here report “a surface average forcing at the top of the atmosphere, coming only from changes in the albedo from 1994/1995 to 1999/2001, of 2.7±1.4 W/m2 (Palle et al., 2003), while observations give 7.5±2.4 W/m2. The Intergovernmental Panel on Climate Change (IPCC, 1995) argues for a comparably sized 2.4 W/m2 increase in forcing, which is attributed to greenhouse gas forcing since 1850”.

Source: “The Earthshine Project: update on photometric and spectroscopic measurements”

http://solar.njit.edu/preprints/palle1266.pdf

This is according to comments from IPCC’s authors (e.g. Joyce Penner), which, no surprise, were omitted from the final IPCC report.

—

[1]

The possible connection between ionization in the atmosphere by cosmic rays and low level clouds

http://www.arm.ac.uk/preprints/433.pdf

TooMuchTime has it exactly right. A few of the extinction events were most likely the result of global cooling, and there is some evidence that all the extinction events may have been a direct consequence of global cooling, regardless of the cause!