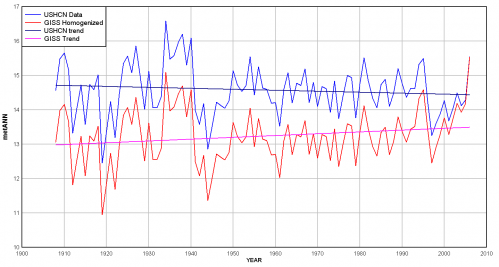

In particular, a few computers at NASA’s Goddard Institute seem to be having a disproportionate effect on global warming. Anthony Watts takes a cut at an analysis I have tried myself several times, comparing raw USHCN temperature data to the final adjusted values delivered from that data by the NASA computers. My attempt at this compared USHCN adjusted to raw data for the entire US:

Anthony Watts does this analysis from USHCN raw all the way through to the GISS adjusted number (the USHCN adjusts the number, and then GISS adds its own adjustments on top of these adjustments). The result: 100%+ of the 20th century global warming signal comes from the adjustments. [Update: I was not very clear on this. This is merely an example for one single site, not for the USHCN or GISS index as a whole. It illustrates the low signal-to-noise ratio in much of the surface temperature record.] There is actually a cooling signal in the raw data:

Now, I really, really don’t want to be misinterpreted on this, so a few notes are necessary:

- Many of the adjustments are quite necessary, such as time of observation adjustments, adjustments for changing equipment, and adjustments for changing site locations and/or urbanization. However, all of these adjustments are educated guesses. Some, like the time of observation adjustment, are probably decent guesses. Some, like site location adjustments, are terrible (as demonstrated at surfacestations.org). The point is that finding a temperature change signal over time with current technologies is a measurement subject to a lot of noise. We are looking for a signal on the order of 0.5°C, where adjustments to individual raw instrument values might be 2-3°C. It is a very low signal-to-noise environment, and one that is inherently subject to biases (researchers who expect to find a lot of warming will, not surprisingly, adjust a lot of measurements higher).

- Warming has occurred in the 20th century. The exact number is unclear, but we have much better data via satellites now that have shown a warming trend since 1979, though that trend is lower than the one that results from surface temperature measurements with all these discretionary adjustments.

As an engineer, any time the adjustments reach the magnitude of the signal I get concerned. This is not to say adjustments are not reasonable.

I have been looking for a way to get at the raw and adjusted data. It might be pretty easy to come by but I haven’t found it yet. Can you tell me where the data for your graph came from and how you did your calcs?

Yes, the climate has changed some in the past 100 years.

Yes, we have likely influenced that change some.

That does not mean that the predictions made about that change by the IPCC/Hansen complex are accurate or even at all useful.

This report, showing how the AGW crisis is a fabrication of AGW promoters, is merely the latest.

And remember: computers don’t lie, but liars use computers.

“The result: 100%+ of the 20th century global warming signal comes from the adjustments” – how often does it need to be said? The US is not the world. You can’t use US measurements to make any claims about global warming.

“The exact number is unclear, but we have much better data via satellites now that have shown a warming trend since 1979, though that trend is lower than the one that results from surface temperature measurements with all these discretionary adjustments.” – Here is a graph of the four main temperature measures, two from satellites and two from ground-based measurements. Without looking at the key, can you tell which ones are satellite and which ones are ground based?

I am not so sure about even the time of observation bias. I’ve looked into it and I am not at all certain how one takes min/max recorded at a given time of the day and “adjusts” it to what would have been measured at midnight. You are just creating data that is not there. Typically when a scientist discovers that he has not recorded data he needs, he goes back and makes another measurement. Lacking a time machine, we are simply stuck with the data we have, and need to develop analytical methods that are not sensitive to its deficiencies.

Fundamentally we need to develop ways to compare the past to the present that do not rely on these adjustments. I am also not at all sure what all this averaging (both spatially and in the time domain) accomplishes.

If you want to know if it’s warming, take a single station’s raw data and perform a regression; don’t average over months or years first, since regressions are designed to handle noisy data. You’ll get a slope and an error estimate. Now do this for a lot of stations. Take all of the trends you’ve produced and report some aggregate statistics. For example, you might be able to say that “90% of the stations in the US have experienced a statistically significant warming trend in the last 100 years, and 50% of those experienced a trend of at least 1°C”.
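The per-station regression idea can be sketched in Python. This is a minimal illustration under stated assumptions, not anyone's published method: the hypothetical `ols_trend` fits an ordinary least squares line to one station's readings and returns the slope with its standard error, and the hypothetical `summarize` reports the share of stations whose warming trend lies more than 1.96 standard errors above zero (roughly the 95% level). One caveat: that textbook standard error assumes independent residuals, whereas daily temperatures are autocorrelated and seasonal, so real use would need anomalies (seasonal cycle removed) and an autocorrelation-adjusted error.

```python
import math
import random
import statistics
from dataclasses import dataclass


@dataclass
class Trend:
    slope: float   # degrees per unit of t (e.g. deg C per year)
    stderr: float  # standard error of the slope


def ols_trend(t: list[float], y: list[float]) -> Trend:
    """Ordinary least squares fit of y = a + b*t; returns b and its standard error.

    Assumes independent residuals, which daily temperature data do not satisfy,
    so the standard error here is optimistic for real station records.
    """
    n = len(t)
    if n < 3:
        raise ValueError("need at least three points for a slope and its error")
    t_mean = statistics.fmean(t)
    y_mean = statistics.fmean(y)
    sxx = sum((ti - t_mean) ** 2 for ti in t)
    sxy = sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, y))
    slope = sxy / sxx
    intercept = y_mean - slope * t_mean
    # Residual variance uses n - 2 degrees of freedom (two fitted parameters).
    rss = sum((yi - (intercept + slope * ti)) ** 2 for ti, yi in zip(t, y))
    return Trend(slope, math.sqrt(rss / (n - 2) / sxx))


def summarize(trends: list[Trend], z: float = 1.96) -> dict[str, float]:
    """Aggregate per-station trends rather than averaging the raw series first."""
    warming = [tr for tr in trends if tr.slope - z * tr.stderr > 0]
    return {
        "stations": len(trends),
        "share_significant_warming": len(warming) / len(trends),
        "median_slope": statistics.median(tr.slope for tr in trends),
    }


if __name__ == "__main__":
    rng = random.Random(1)
    years = [d / 365.25 for d in range(365 * 30)]  # 30 years of daily readings
    # Synthetic stations: an assumed true trend of 0.01 C/yr plus 2 C of daily noise.
    stations = [
        ols_trend(years, [0.01 * t + rng.gauss(0.0, 2.0) for t in years])
        for _ in range(20)
    ]
    print(summarize(stations))
```

The synthetic stations in the `__main__` block are invented test data, not USHCN records; their fitted slopes should scatter around the assumed 0.01 °C per year.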

You also might be able to do some geographical weighting, though that could be problematic. But you get the idea. Take the individual station trends and just break them out by all of their attributes – rural vs urban, type of equipment, elevation, type of measurement (continuous, min/max), etc. Don’t attempt to average it all together into a single number based on the assumptions that your “adjustments” to control for station differences are somehow perfectly accurate.
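Breaking the station trends out by attribute can be sketched the same way. This is a minimal, hypothetical helper, assuming each station's trend has already been computed (for example by a per-station regression) and its metadata is stored as a dict; stations missing the attribute are grouped under "unknown" rather than dropped, and no grand average is formed across groups.

```python
import statistics
from collections import defaultdict


def breakdown(records, attribute):
    """Group per-station trends by one attribute and summarize each group.

    records: iterable of (station_id, trend, attributes), where trend is a slope
    (e.g. deg C per century) and attributes is a dict such as {"siting": "rural"}.
    Returns {attribute value: (station count, median trend)}.
    """
    groups = defaultdict(list)
    for _station_id, trend, attributes in records:
        groups[attributes.get(attribute, "unknown")].append(trend)
    return {
        value: (len(trends), statistics.median(trends))
        for value, trends in sorted(groups.items())
    }
```

Calling `breakdown(records, "siting")`, then again for equipment type, elevation band, and measurement type, yields one small table per attribute instead of a single number.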

“jennifer”-

in the chart you post, the GISS has approximately 2 times the linear warming trend of the UAH over the same period.

are you claiming that to be insignificant?

seems like a pretty significant difference to me…

“morganovich”: here are the trends in the four datasets, from 1979 to present. Which one looks anomalous to you?

In the 17th century Galileo, aware that sound has a finite speed, hypothesized by analogy that light also has a finite speed. He stood on one hilltop with a lantern while a partner stood on another hill some distance away. Galileo’s system was to hold a cloth to block his lantern, then swiftly move the cloth away. His partner would look for the lantern signal, then whip away the cloth in front of his own lantern. The time between uncovering his own lantern and seeing his partner’s lantern would be the time for the round trip plus the time for reflex action. After measuring the time lapse for one separation, his partner went to a hill much farther away. That longer time interval would equal the time for light to travel the longer distance plus the reflex time, which would be the same as for the shorter distance.

Galileo’s plan worked in theory, but needless to say, measurement error in reaction time overwhelmed the measurement of the speed of light. I suspect those trying to measure global temperature change are having the same problems as Galileo.
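Galileo's two-distance design can be written out explicitly. Each timing is t = 2d/c + r, where d is the hill separation, c the speed of light, and r the reflex time, so subtracting the near timing from the far one cancels r and gives c = 2(d_far - d_near)/(t_far - t_near). The sketch below uses assumed hill separations of 1.5 km and 15 km and an assumed 10 ms change in reaction time between trials; these are illustrative numbers, not Galileo's actual figures.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s


def round_trip_time(distance_m: float) -> float:
    """Time for light to cross distance_m and come back."""
    return 2 * distance_m / SPEED_OF_LIGHT


def estimate_speed(d_near: float, t_near: float, d_far: float, t_far: float) -> float:
    """Each timing is t = 2d/c + r, so the reflex time r cancels in the difference."""
    return 2 * (d_far - d_near) / (t_far - t_near)


if __name__ == "__main__":
    d_near, d_far = 1_500.0, 15_000.0  # assumed hilltop separations in metres
    reflex = 0.25                      # assumed reaction time in seconds
    signal = round_trip_time(d_far) - round_trip_time(d_near)
    print(f"light-time difference: {signal * 1e6:.0f} microseconds")
    # A reaction-time change of just 10 ms between the two trials (an assumed,
    # optimistic spread for human reflexes) swamps the signal entirely.
    t_near = round_trip_time(d_near) + reflex
    t_far = round_trip_time(d_far) + reflex + 0.010
    print(f"estimated c: {estimate_speed(d_near, t_near, d_far, t_far):.3g} m/s")
```

With these distances the two light times differ by only about 90 microseconds, so a reaction-time wobble roughly a hundred times larger dominates the estimate.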

http://physicsworld.com/cws/article/print/3431

“Current models predict that, on a global average, the atmosphere absorbs about 65 W m-2, whereas observations from the top of the atmosphere and the Earth’s surface show that the actual absorption is 95 W m-2. This mismatch of some 30 W m-2 corresponds to about 10% of the globally averaged incoming solar radiation, suggesting that some extra anomalous absorption needs to be added to the models. ”

So the models are off by 30 W m-2, while the expected effect of a doubling of CO2 would be 3.8 W m-2. – A. McIntire
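The arithmetic behind this comparison can be checked directly. This small sketch uses the figures quoted above (65 and 95 W m-2 absorbed, 3.8 W m-2 for doubled CO2) plus one assumed value: a solar constant of about 1361 W m-2, which averaged over the whole sphere gives roughly 340 W m-2 of incoming sunlight. The ratio only compares magnitudes; absorbed solar radiation and CO2 forcing are different quantities.

```python
modelled_absorption = 65.0   # W m^-2, model estimate quoted above
observed_absorption = 95.0   # W m^-2, observational estimate quoted above
co2_doubling_forcing = 3.8   # W m^-2, figure used in the comment above
solar_constant = 1361.0      # W m^-2 at the top of the atmosphere (assumed value)

mismatch = observed_absorption - modelled_absorption
# A sphere's surface area is 4x its cross-section, so divide the constant by 4.
mean_incoming = solar_constant / 4

print(f"mismatch: {mismatch:.0f} W m^-2")
print(f"share of mean incoming sunlight: {mismatch / mean_incoming:.1%}")
print(f"mismatch / CO2-doubling forcing: {mismatch / co2_doubling_forcing:.1f}x")
```

Under these assumptions the 30 W m-2 gap is a little under 9% of mean incoming sunlight (consistent with the quoted "about 10%") and roughly eight times the 3.8 W m-2 figure.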

Alan,

Well said.

that graph comes out quite differently when i run it:

http://www.woodfortrees.org/plot/gistemp/from:1979/to:2009/normalise/trend/offset:0.03/plot/uah/normalise/trend/plot/hadcrut3vgl/from:1979/to:2009/normalise/trend/plot/rss/normalise/trend/offset:0.05

RSS has a somewhat steeper slope than UAH, but is 1/3 less steep than giss/hadcrut (which seem to be in pretty tight agreement).

since 2001, even hadcrut has pulled away from giss

http://www.woodfortrees.org/plot/gistemp/from:2001/to:2009/normalise/trend/offset:0.45/plot/uah/from:2001/to:2009/normalise/trend/offset:0.4/plot/hadcrut3vgl/from:2001/to:2009/normalise/trend/offset:0.29/plot/rss/from:2001/to:2009/offset/trend/normalise

As a Ph.D. analytical chemist who has actually attempted to measure the melting point of a pure substance to a tenth of a degree in a controlled lab setting with properly calibrated instrumentation, I think that it is crazy to think that global mean temperature can be measured to a similar accuracy. It is not possible to representatively sample the globle. It is not possible to assure instrumental calibration. Satellites have all sorts of other problems. It’s not possible to adjust for these problems. Anyone who says they can measure the temperature of the earth is nuts. You would be even more crazy to believe temperatures recorded earlier in the 20th century are reliable. You should be committed to a nut house if you think surrogate measures of temperature from tree rings are valid or that CO2 measurements from ice core bubbles are accurate and reliable. In my opinion, everyone, both advocates and skeptics, is merely pushing around dirty data sets and participating in group masturbation exercises. All of this modeling and retrospective data collection and analyses built on inaccurate and unreliable data is shameful. Did ANYONE engaged in this stuff go to school?

I concur with Ray, as I work in a climate-controlled lab.

However, satellite measurements, unlike near surface stations, can be calibrated and errors quantified. I have much more faith in satellite data.

For the record RSS uses climate models for their calibration 🙂

In reality it is ocean heat content that should be the metric used to measure whether the globe is retaining more or less heat. SST is a reflection of this.

One need only look at the current PDO Index to understand the earth is likely transitioning to a long-term period of cooling.

P.S. GISS and HadCRU are diverging from satellites.

I try hard to understand this stuff, but sometimes I am not up to it.

My current hangup is this: “Many of the adjustments are quite necessary, such as time of observation adjustments, …”

The readings recorded were (are?) max T and min T for the 24-hour period, right?

Unless the TOO was at the time near where the max or the min occurred for the day, why does TOO matter?

The graph (second one) that you reposted from my website http://www.wattsupwiththat.com is misrepresented in this context.

It is NOT for all GISS data, only for a specific station, Santa Rosa, NM, which I recently surveyed. I was illustrating that this single station had a cooling trend, but that after the GISS homogenization adjustment had been applied, that cooling trend turned into a warming trend.

here is my original writeup:

http://wattsupwiththat.com/2008/12/08/how-not-to-measure-temperature-part-79-would-you-could-you-with-a-boat/

A correction should be posted.

“morganovich”: you’ve put a “normalise” in there. It’s not clear what that does, and you don’t need it. Trends from different datasets measuring the same thing on the same timescale can be directly compared. In fact, my link had a “normalise” left in accidentally, and a better graph is this one. Which measures look anomalous?

DG: “For the record RSS uses climate models for their calibration 🙂 ” – no they don’t.

“GISS and HadCRU are diverging from satellites.” – no they aren’t. See graphs above.

Larry Sheldon: Tmin probably comes shortly before sunrise. Often, temperature readings are not taken then, because it’s not convenient. If you took a measurement after sunrise, you wouldn’t measure Tmin. Similarly, Tmax is probably at about 3pm. If you took a measurement at 4pm, you wouldn’t measure Tmax. The time of observation thus needs to be corrected for. If there was a systematic change over time in the time observations were taken, this may introduce a spurious warming or cooling trend unless corrected for.
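The mechanism can be explored with a toy simulation. This is a sketch under loudly stated assumptions, not the USHCN or GISS adjustment procedure: a synthetic hourly temperature series (a cosine diurnal cycle peaking at 15:00 plus a slowly varying AR(1) "weather" term with made-up parameters), read by a max/min thermometer that is reset once a day at `reset_hour`. Because the max marker starts at the temperature at reset time, an afternoon reset lets one hot afternoon count toward two consecutive observation days; a morning reset largely avoids that for Tmax. `synthetic_hourly_temps` and `mean_recorded_max` are hypothetical helpers.

```python
import math
import random
import statistics

HOURS_PER_DAY = 24
PEAK_HOUR = 15      # assumed hour of the daily maximum (about 3 pm)
AMPLITUDE = 6.0     # assumed half-range of the diurnal cycle, deg C


def synthetic_hourly_temps(days: int, seed: int = 42) -> list[float]:
    """Toy hourly series: a cosine diurnal cycle plus slowly varying 'weather'.

    The weather term is an hourly AR(1) process whose parameters are assumptions:
    day-to-day persistence of about 0.7 and a standard deviation of about 4 C.
    """
    rng = random.Random(seed)
    phi = 0.7 ** (1 / HOURS_PER_DAY)          # hourly persistence
    shock_sd = 4.0 * math.sqrt(1 - phi ** 2)  # keeps the stationary s.d. near 4 C
    weather = 0.0
    temps = []
    for i in range(days * HOURS_PER_DAY):
        weather = phi * weather + rng.gauss(0.0, shock_sd)
        hour = i % HOURS_PER_DAY
        diurnal = AMPLITUDE * math.cos(2 * math.pi * (hour - PEAK_HOUR) / HOURS_PER_DAY)
        temps.append(weather + diurnal)
    return temps


def mean_recorded_max(temps: list[float], reset_hour: int) -> float:
    """Mean Tmax from a max/min thermometer read and reset daily at reset_hour.

    After a reset the max marker sits at the current temperature, so each
    observation window runs from one reset reading to the next, inclusive.
    """
    maxima = []
    start = reset_hour
    while start + HOURS_PER_DAY < len(temps):
        maxima.append(max(temps[start:start + HOURS_PER_DAY + 1]))
        start += HOURS_PER_DAY
    return statistics.fmean(maxima)


if __name__ == "__main__":
    temps = synthetic_hourly_temps(days=3650)
    reference = mean_recorded_max(temps, reset_hour=0)  # midnight = calendar-day Tmax
    for reset in (7, 17):
        bias = mean_recorded_max(temps, reset) - reference
        print(f"reset at {reset:02d}:00 -> mean Tmax bias {bias:+.2f} C")
```

In this toy model the 17:00 reset shows a clear warm bias in mean Tmax relative to midnight, while 07:00 stays close to zero, so a station that moved from afternoon to morning readings would show a spurious cooling step of roughly that size. The real magnitude depends on the local climate and is not something this sketch can estimate.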

“If you took a measurement after sunrise, you wouldn’t measure Tmin. Similarly, Tmax is probably at about 3pm. If you took a measurement at 4pm, you wouldn’t measure Tmax. The time of observation thus needs to be corrected for. “

Probably? Are you certain? What other scientists are allowed to say “well, we didn’t measure at the time we wanted to, but the ‘real’ value was probably this”?

“If there was a systematic change over time in the time observations were taken, this may introduce a spurious warming or cooling trend unless corrected for.”

Let’s think it through. TOB arises purely as an artifact of our odd insistence on using monthly averages to derive yearly averages. When one is recording min/max readings for a 24-hour period, and not taking the reading at midnight, you are likely to attribute a min or max to the incorrect month at the month ends. For example, if you take a max reading of 80°F at 8am June 1, it’s most likely that that max actually occurred in May. In this example May would appear warmer than it actually was. Different times of observation can result in the opposite bias, making May appear cooler and June appear warmer. This effect is most prominent when the temperatures are changing rapidly: spring and fall.

Now, you might suggest that we just not use monthly averages, but instead average over the entire year, and perhaps use boundaries that don’t include times when the temperature is changing rapidly to minimize any remaining effect. That would of course be the sensible solution, but we don’t do this.

Regardless, the effect averages out, as for any particular time of observation, the biases in the fall and spring are opposite, and on average, they cancel out. The one possible exception to this is if a station changes its time of observation mid-year. Suppose they have a time of observation that makes spring look cooler, and fall look warmer, but then switch in summer to a time of observation that makes spring look warmer and fall look cooler. Well then both fall and spring look cooler – for that year only.

So, if, magically, the station decides to switch time of observation just right, you can see an artificial cooling for that year’s record. Never mind the fact that such a switch can also lead to artificial warming if the opposite switch is made.

So Time of Observation bias, when time of observation is constant, averages out over a year to zero, and when changed in a year can only affect that single year’s annual average.

Now if perhaps, just perhaps, a bunch of stations made just the right change, at just the right time of year in the last few decades, this could result in an anomalous cooling when everything is averaged together. But the effect would rapidly diminish as all stations made the switch.

If one were to adjust for the effect, the adjustment would start at zero, rise to a peak value in the year in which the maximum number of stations switched, and then fall to zero again as the stations stabilize at the new time of observation. What do we see in Hansen et al. 2001? We do start at an adjustment of near zero, but it rises to a peak and stays there, attaining a nearly constant value in the 1990s. Now I don’t know what the models behind this adjustment say about estimated time of observation switches, but that adjustment must fall back to zero eventually, as there are only so many stations that can make such a switch; eventually all of them will have switched. I eagerly await the reduction in TOB adjustments in Hansen et al. 2011. 😉

Regardless, the entire exercise in adjusting for this bias is a farce. It amounts to creating data you just don’t have. The adjustment is based on mathematical models, not empirical data, thus has no basis in reality. It is also not based on actual observed changes in time of observation, it’s inferred from the data itself. Why not just average in such a way that minimizes the bias and avoid the issue entirely?

Regarding TOBS, this has had the crap beaten out of it at CA.

http://www.climateaudit.org/?p=2106

A highly recommended reference in that thread runs through some examples.

http://www.john-daly.com/tob/TOBSUM.HTM

The issue is real, but the adjustment is not easy, and it’s not just a matter of when someone “reads” the thermometer, but of when someone resets the 24 hour observation period.

BTW, for the five major temperature monitoring sources, here is how they compare for this decade (since Jan 2000; OLS is the ordinary least squares slope). You can judge for yourself who the outliers might be.

| | GISS | NOAA | HadCRUT3 | UAH | RSS |
|---|---|---|---|---|---|
| OLS trend (°C per decade) | 0.11 | 0.11 | 0.02 | 0.04 | 0.03 |

You have two typos in your post: Anthony Watts’ last name is Watts, not Watt.

Keep up the great work.

jen-

what are we to make of this result?

http://www.woodfortrees.org/plot/gistemp/from:2001/to:2009/trend/offset:0.027/plot/uah/from:2001/to:2009/trend/offset:0.275/plot/hadcrut3vgl/from:2001/to:2009/trend/offset:0.1/plot/rss/from:2001/to:2009/offset:0.225/trend

the GISS looks to be a huge outlier in temperature since 2001. the next closest dataset (hadcrut) shows easily 3 times the cooling. the set showing the most cooling (RSS) shows nearly 5 times as much.

over the last decade, GISS shows a .11 C warming trend. UAH shows .05. RSS shows .03. this also seems to indicate that, in comparison to satellites, GISS has been an outlier for some time.

i have concerns that the OLS straight line plotting of the woodfortrees tool may be masking more than it is showing. the longer term agreement between GISS and RSS is simply not as precise as it shows nor is the divergence of UAH and RSS nearly so great.

this is RSS monthly data plotted vs UAH. they are very tightly correlated; the correlation is well over .9.

http://www.junkscience.com/MSU_Temps/MSUvsRSS.html

this is a WFT plot of their OLS linear trends:

http://www.woodfortrees.org/plot/uah/trend/offset:0.12/plot/rss/trend/offset:0.15

it implies that RSS has a more than 25% steeper slope of temperature gain than UAH.

looking at the actual data, such a result seems unrealistic.

this is the problem with linear regression.

i also echo the sentiment that trying to measure something as complex as global temperature to within a tenth of a degree is almost certainly well beyond our current technical capabilities. the noise in the system is simply too great to trust a result. other sciences would view data of this quality to be junk.

morg: you seem very confident that the trends are not being reported accurately by woodfortrees.org. Have you checked them yourself? What statistical basis is there for the statement ” the longer term agreement between GISS and RSS is simply not as precise as it shows nor is the divergence of UAH and RSS nearly so great”?

As for temperatures since 2001, if you look at very short periods of time, you do not get a solid idea of the long term trend, obviously. On a whim I plotted 1990 – 1992: graph. Which one is the outlier there? You want to never ever use again the dataset that gives the highest warming trend? Stick with the ones that show the cooling trend you seem so sure is actually there?

I have no faith in satellite data because they still do not have the ability to representatively sample the globe/atmosphere. Same goes for sea-based stations. There’s the whole issue of instrumentation accuracy and precision and calibration. All these devices have these problems, but the big problem is gaining a representative sample/samples, and you can’t do it. Anyway, that’s today. Think about 100 years ago. We are comparing all the data today against data from a time when instrumentation was poor, documentation was poor, no computers existed to collect or track data, etc. I can imagine the temperatures reported from Arizona back then. Some cowboy read the thermometer for some official, didn’t write it down, and by the time he got back after riding his horse, he forgot and made up a number. Reality probably isn’t very far from something like this. I guess temperature records were official since 1880 or something, but something tells me they operated under a different definition of official back then.

I think it is good for the skeptics to reanalyze all these data to show how the advocates mislead but the skeptics should always note that the data sets are unreliable to begin with.

Folks: Some WFT guidance: doing a normalise before a trend is a really bad idea – normalise scales the series into a unit range, so any trend will be similarly scaled!

Please see the notes at: http://www.woodfortrees.org/notes#trends

I agree with Jennifer that UAH has a significantly lower trend (approx 0.13K/decade) than the others (around 0.16K/decade) – why that should be I leave others to work out…

Like any mathematical tool, this one can be deceptive. If one does not understand what is going on ‘under the hood’, one cannot reliably interpret the results. For example, see:

http://www.woodfortrees.org/plot/gistemp/from:1979/to:2009/mean:90/offset/trend/plot/uah/mean:90/offset:0.25/trend/plot/hadcrut3vgl/from:1979/to:2009/mean:90/offset:0.1/trend/plot/rss/mean:90/offset:0.27/trend

If the previous link didn’t work, try this:

MeanOffsetLinearTrend

Well said, Ray. And may I say that your typo is brilliant; for me it’s “globle” warming from now on.

I don’t understand thermometers that cannot record minimum and maximum. If the readings are recorded daily at say 5:00pm, an intermediate temperature period of the day, then TOO adjustments are not required. Is the actual time of minimum or maximum important? Getting an accurate record is important.

You are going to love this AP article today

http://news.yahoo.com/s/ap/20081214/ap_on_sc/global_warming_obama

“When Bill Clinton took office in 1993, global warming was a slow-moving environmental problem that was easy to ignore. Now it is a ticking time bomb that President-elect Barack Obama can’t avoid.

Since Clinton’s inauguration, summer Arctic sea ice has lost the equivalent of Alaska, California and Texas. The 10 hottest years on record have occurred since Clinton’s second inauguration. Global warming is accelerating. Time is close to running out, and Obama knows it.”

The whole thing is a jaw-dropper.

If you guys are going to do temp comparisons, you need to compare UAH and GISS because they extrapolate the poles… then you need to compare RSS and HADCRUT because they do not extrapolate the poles.

Richard111,

The hype exemplified in the AP article is misleading and literally untrue.

But it is typical of AGW propaganda: fear mongering, manipulating the casual reader to demand instant action, and unable to withstand any sort of critical scrutiny at all.

Ray – I think I’ll expand on what you said.

It appears your words have fallen on deaf ears (eyes?). This instrumentation issue seems to be nearly impossible to get across.

It is wrong technically to attempt to find a long term measured value trend smaller than the accuracy of the instruments doing the measurement. Our current scientific weather instruments have a typical calibration accuracy of plus or minus one half degree. That means, in this case, that if we take the instrument back to the calibration lab within some reasonable time frame (six months? a year?) it should be within that range. It may have drifted up or down half a degree or more during that time span and still be within specs.

It is also wrong technically to assume that instrument calibration accuracy carries through to the final measurement. How and where the instrument connects to the process to be monitored matters even more. This is a critical engineering issue in facilities such as power plants and manufacturing plants: sensors (and their thermal wells, if needed) must be located carefully to make sure they measure what is desired and not some non-representative area. Even under ideal circumstances, one should not expect the process (air temperature) measurement accuracy of our weather instruments to be better than about plus or minus one degree.

What about modern signal analysis programs? Can’t they find extremely weak radio signals buried in tons of noise? Isn’t the analysis of weather temperatures the same thing? Nope, not by a long shot. First of all, instrument bias and drift cancel out in analyzing weak radio signals. Radio signals are sampled over many, many repeated cycles, looking for self-correlation from cycle to cycle. 20th and 21st century weather analysis, on the other hand, is looking for trends much longer than the measurement periods involved, not repeated cycles of signal.
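The distinction between random noise and a fixed instrument offset can be illustrated with a sketch. Assumptions: a single instrument, independent Gaussian noise on each reading, and a constant calibration offset (real drift changes over time, which this toy ignores). `averaged_error` is a hypothetical helper showing that averaging many readings shrinks the random part roughly as 1/sqrt(n) while the offset passes straight through.

```python
import random
import statistics


def averaged_error(readings: int, noise_sd: float, drift: float, seed: int = 0) -> float:
    """Error of the mean of many readings from one instrument.

    Each reading = true value + a fixed calibration offset (drift) + independent
    random noise. Averaging shrinks the noise; the offset is untouched.
    """
    rng = random.Random(seed)
    true_value = 15.0  # arbitrary true temperature, deg C
    values = [true_value + drift + rng.gauss(0.0, noise_sd) for _ in range(readings)]
    return statistics.fmean(values) - true_value


if __name__ == "__main__":
    for n in (10, 1_000, 100_000):
        noise_only = averaged_error(n, noise_sd=0.5, drift=0.0)
        with_offset = averaged_error(n, noise_sd=0.5, drift=0.5)
        print(f"{n:>7} readings: {noise_only:+.3f} C (noise only), "
              f"{with_offset:+.3f} C (0.5 C offset)")
```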

What does this all mean from a technical perspective? No long-term trend should be claimed unless it is at least three times the instrument accuracy, or in this case, plus or minus three degrees. Preferably, the trend should be ten times the instrument accuracy. Trends claimed below these values are indistinguishable from instrument bias and drift.

For those folks who believe otherwise, please go visit the folks at a calibration lab and have them explain what is needed to make accurate measurements.

Richard111,

“If the readings are recorded daily at say 5:00pm, an intermediate temperature period of the day, then TOO adjustments are not required. Is the actual time of minimum or maximum important? Getting an accurate record is important.”

Again, I recommend the links I posted earlier. But consider your example. It would not be unusual for the high of the day to occur around 5 o’clock. Say it’s a very warm day, 94 deg F. Our duty-bound temperature recorder trudges out to the weather station, takes his/her reading and resets the thermometer. The next day is not as warm, but guess what the 24-hour high is… 94 deg F. Now let’s say the day is unusually cool… 68 deg F. If the next day is warmer, the next day’s temperature will correctly be recorded as the next day’s high (assuming the temperature doesn’t keep rising after 5 o’clock).

If the routine is to reset the thermometer every day at 5 o’clock, there will be many days where an erroneously high temperature reading will be recorded for the next day. There will be virtually none where an erroneously low reading will be recorded. If your example were 10 PM or 10 AM, you would be closer to the “intermediate” temperature you posited, but 5 PM is too close to most daily highs to be considered “intermediate”.

As I said earlier, the effect is real. Quantifying it is very difficult.
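The carry-over in the 5 o'clock example can be reproduced with a tiny deterministic sketch. The hourly temperature shape (a smooth curve peaking at 17:00 and 20°F cooler at 05:00) and the three daily peaks are invented for illustration; `toy_day` and `observed_highs` are hypothetical helpers.

```python
import math


def toy_day(peak_f: float) -> list[float]:
    """24 hourly readings (deg F) peaking at 17:00, 20 F cooler at 05:00 (assumed shape)."""
    return [peak_f - 20 * (1 - math.cos(2 * math.pi * (h - 17) / 24)) / 2 for h in range(24)]


def observed_highs(hourly: list[float], reset_hour: int) -> list[float]:
    """Highs shown when a max thermometer is read and reset every day at reset_hour."""
    highs = []
    start = reset_hour
    while start + 24 < len(hourly):
        # The window includes both the reset reading and the next day's reading.
        highs.append(max(hourly[start:start + 25]))
        start += 24
    return highs


if __name__ == "__main__":
    hourly = toy_day(94) + toy_day(80) + toy_day(82)  # hot day, cooler day, slightly warmer day
    print("reset at 17:00:", observed_highs(hourly, 17))  # [94.0, 82.0] for days 2 and 3
    print("reset at 00:00:", observed_highs(hourly, 0))   # [94.0, 80.0] for days 1 and 2
```

With the 17:00 reset, day 2 is logged at 94°F even though its own afternoon peaked at 80°F, while day 3's warmer afternoon is logged correctly; resetting at midnight gives the calendar-day highs.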

Well if you account for the fact that the lower troposphere warms 1.2 times as much as the surface, then you get a different story. UAH obviously still shows the least warming, while RSS lies half way between. However, it is clear that both the satellites show less warming than the surface metrics do.

Sorry, here’s the graph:

http://www.woodfortrees.org/plot/hadcrut3vgl/from:1979/trend/offset:-0.15/plot/rss/scale:0.8333333/trend/plot/gistemp/from:1979/trend/offset:-0.24/plot/uah/from:1979/scale:0.8333333/trend

@Ray,

[citation]

….Think about 100 years ago. We are comparing all the data today against data from a time when instrumentation is poor, documentation was poor, no computers existed to collect or track data, etc. I can imagine the temperatures reported from Arizona back then. Some cowboy read the thermometer for some official, didn’t write it down, by the time he got back after riding his horse, he forgot and made up a number….

[/citation]

Ray, I somehow can’t agree:

“at that time instrumentation is poor” – nowadays it’s sometimes poor, too – and very often in poor locations.

“documentation was poor” – nowadays documentation is sometimes poor, too.

“Some cowboy read….” – I’m not from the U.S., I’m from Western Europe, but in my last year of school I did a project on the life of real cowboys, not the ones in the movies (114 pages). I did more work on that in the following 4 to 5 years; it was an amazing theme.

– Cowboys usually had a very strong work ethic.

– Cowboys were often very astute at weather forecasting. Weather could influence the success or failure of their work, and could even make their lives dangerous. Don’t expect these guys to have been sloppy with documentation about weather. In fact, I know of ranches in the West that took notes on nearly everything, like the logbooks on ships. Cowboys’ memories were extremely good. Compared to them, we would look like Alzheimer’s patients.

– Funny: a plain cowboy knew and used nearly 4 times as many different knots as an ordinary seaman on a sailing vessel (from my personal knowledge of knots, working knots only, not the fancy ones: about 40 from seamen, over 150 from cowboys).

Jennifer, are you claiming that these two series display vastly different trends?

You should acquaint yourself with some signal analysis tools.

Best,

Speaking of satellites, UAH MSU and RSS MSU are highly convergent, even down to the smallest blips in their data, which is an indication that something real is being measured. And they can be compared point for point with land-based data whenever monthly values are available.