A while back I wrote about a number of climate forecasts (e.g. for 2007 hurricane activity) where the actual outcome landed in the bottom 1% of the forecast range. I wrote:

If all your forecasts are coming out in the bottom 1% of the forecast range, then it is safe to assume that one is not forecasting very well.

Well, now that the year is over, I can update one of those forecasts, specifically the forecast from the UK government's Met Office, which said:

- Global temperature for 2007 is expected to be 0.54 °C above the long-term (1961-1990) average of 14.0 °C;

- There is a 60% probability that 2007 will be as warm or warmer than the current warmest year (1998 was +0.52 °C above the long-term 1961-1990 average).

Playing around with the numbers, and assuming a normal distribution of possible outcomes, this implies a forecast mean of 0.54C and a standard deviation of about 0.0785C. Those two values together give a 60% probability of temperatures over 0.52C.
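For anyone who wants to check the back-solve, here is a minimal sketch using only Python's standard library. It assumes, as above, that the forecast is a normal distribution. The variable names are mine, not the Met Office's. Solving exactly gives a standard deviation of roughly 0.079C, which is close to the 0.0785C figure used here.

```python
from statistics import NormalDist

forecast_mean = 0.54   # Met Office central forecast anomaly, deg C
record_1998 = 0.52     # 1998 anomaly, deg C
p_exceed = 0.60        # stated probability of matching or beating 1998

# P(X >= record) = 0.60 means the record sits at the 40th percentile
# of the (assumed normal) forecast distribution.
z_record = NormalDist().inv_cdf(1.0 - p_exceed)

# Solve (record - mean) / sigma = z_record for sigma.
implied_sigma = (record_1998 - forecast_mean) / z_record

# Sanity check with the rounded figure quoted in the text.
check = 1.0 - NormalDist(forecast_mean, 0.0785).cdf(record_1998)

print(f"implied std deviation: {implied_sigma:.4f} C")
print(f"P(anomaly > 0.52 C) with sigma = 0.0785: {check:.3f}")
```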

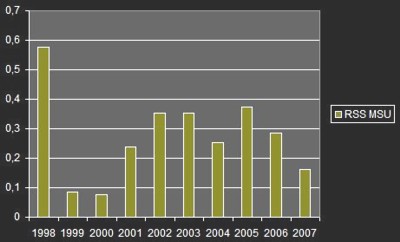

The most accurate way to measure the planet’s temperature is by satellite. This is because satellite measurements cover the whole globe (oceans, etc.) and not just the few land areas where we have measurement points. Satellites are also free of things like urban heat biases. The only reason catastrophists don’t agree with this statement is that satellites don’t give them the highest possible catastrophic temperature reading (because surface readings are, in fact, biased up). Using this satellite data:

and scaling the data to a 0.52C anomaly in 1998 gets a reading for 2007 of 0.15C. For those who are not used to the magnitude of anomalies, 0.15C is WAY OFF from the forecasted 0.54C. In fact, using the mean and standard deviation of the forecast we derived above, the UK Met Office was basically saying it was 99.99997% certain that the temperature would not go so low. Another way of saying this is that the Met Office forecast implied 2,958,859:1 odds against the temperature being this low in 2007.
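The tail probability can be reproduced with a few lines of Python. This is a sketch under the same assumptions as before: a normal forecast distribution with mean 0.54C and standard deviation 0.0785C, and a scaled 2007 satellite reading of 0.15C. I use `math.erfc` for the lower tail because it stays accurate for very small probabilities. The result should land at odds of roughly 3 million to 1, in line with the figure above.

```python
import math

forecast_mean = 0.54   # deg C, Met Office central forecast
sigma = 0.0785         # deg C, std deviation derived in the text
observed_2007 = 0.15   # deg C, scaled satellite anomaly for 2007

# Number of standard deviations the observation sits below the forecast mean.
z = (observed_2007 - forecast_mean) / sigma

# Lower-tail probability P(X <= observed) for a normal distribution,
# written with erfc to avoid precision loss far out in the tail.
p_low = 0.5 * math.erfc(-z / math.sqrt(2.0))

# Odds against an outcome this low, expressed as N:1.
odds_against = (1.0 - p_low) / p_low

print(f"z-score: {z:.3f}")
print(f"P(anomaly <= 0.15 C): {p_low:.3e}")
print(f"implied certainty of not going this low: {100 * (1 - p_low):.5f}%")
print(f"odds against: about {odds_against:,.0f}:1")
```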

What are the odds that the Met office needs to rethink its certainty level?